Two cool things coming up:

Global Physics Department

I should have blogged about this earlier. Every Wednesday at 9:30 Eastern, there is an Elluminate session to discuss various topics in physics education. There have been slightly more than 20 participants each week. That's dinner/family time for me, so I can never make it live. Luckily, all the sessions are recorded.

Next week (May 18) Brian Frank will be discussing how to build on student misconceptions (his title: "The wrong ideas I love my students to have, and the right ideas I worry about"). He's got a bunch of really good posts on misconceptions. In this post, Brian links to a John Clement paper on bridging misconceptions that is FANTASTIC.

Normally you can just show up. But the following week SEAN (OMG I'm geeking out!) CARROLL is going to host it. Due to space limitations, you'll need to register here.

The permalink for the Elluminate sessions is http://tinyurl.com/RundquistOfficeHours

EdCampSFBay

When: Saturday, August 20, 8-4

Where: Skyline High School, Oakland

If you don't know what an EdCamp is, hit the link and watch the video.

You can follow @EdCampSFBay for updates.

I'm definitely planning to be there so say hello if you see me. And no, I don't plan to run a session.

Friday, May 13, 2011

Tuesday, May 10, 2011

Formulating Measures

Back to assessment! It's not technically research, so it's not getting the edresearch tag.

Other than the very obvious ones (formulating a measure for speed or for density), I fully admit to slacking on having my students formulate measures.

I found this via Sarah Cannon, who blogs at Sarah's Development. The full paper was written by Judah Schwartz and can be found on his old Harvard page. This work came from the Balanced Assessment in Mathematics project.

The sample prompt is really all you need to figure out what's going on here:

If you can't read the questions, they are:

- Which of the rectangles is the "squarest"?

- Arrange the rectangles in order of "square-ness" from most to least square.

- Devise a measure of "square-ness," expressed algebraically, that allows you to order any collection of rectangles in order of "squareness."

- Devise a second measure of "square-ness" and discuss the advantages and disadvantages of each of your measures.[1]

I love these -ness problems. There's a ton of high-level thinking here, and formulating measures is the first step in model building. One of my ongoing struggles is helping my students become more precise with their language. What does "...works better" actually mean?

I know the paper says, "Please do not quote," at the top, but I'm going to ignore that:

"Formulating a measure requires one to be explicit about the constituent elements of data that one believes are important in a given situation." (p. 2)

Standard measures in the paper's examples included rates, ratios, and area. Non-standard measures that were listed included crowded-ness, sharp-ness (as in curves), disc-ness (of cylinders), and developing a "size of task" measure from a given data set.

On page 10, the author lists the proposed components in formulating a measure.

- Observing

- Comparing

- Ordering

- Making Measurements

- Analyzing Data

At the end of the paper (starting on page 11), the author goes on to differentiate between measures and models (models having the ability to predict in untried instances and being falsifiable).

[1] Did you start with Horizontal/Vertical, with "most square" being closest to 1, as your first measure of square-ness? I did. But Schwartz points out that this measure changes if you rotate the figure 90 degrees. Should a measure of square-ness do that? Great great GREAT conversation fodder.
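For anyone who wants to poke at that rotation question, here's a minimal Python sketch comparing two possible square-ness measures. Both measures and all the rectangle dimensions are my own hypothetical choices, not Schwartz's: `ratio_measure` is the Horizontal/Vertical idea scored by distance from 1, and `min_max_measure` divides the short side by the long side.

```python
# Hypothetical square-ness measures (illustration only, not from the paper).
# h = horizontal side, v = vertical side, both positive.

def ratio_measure(h, v):
    """Horizontal/Vertical, scored by distance from 1 (0 = a perfect square)."""
    return abs(h / v - 1)

def min_max_measure(h, v):
    """Short side / long side: 1 for a square, closer to 0 as the rectangle gets skinnier."""
    return min(h, v) / max(h, v)

# A 2-by-1 rectangle and the same rectangle rotated 90 degrees.
wide, tall = (2, 1), (1, 2)
print(ratio_measure(*wide), ratio_measure(*tall))      # 1.0 0.5
print(min_max_measure(*wide), min_max_measure(*tall))  # 0.5 0.5

# Ordering a hypothetical set of rectangles (width, height) from most to least square.
rects = [(3, 1), (4, 3), (5, 5), (2, 3)]
ranked = sorted(rects, key=lambda r: min_max_measure(*r), reverse=True)
print(ranked)  # [(5, 5), (4, 3), (2, 3), (3, 1)]
```

The ratio version scores the rotated copy as "squarer" than the original, which is exactly the problem in the footnote. The min/max version doesn't care which side is horizontal; the tradeoff is that it no longer tells you which way the rectangle is oriented.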

Wednesday, May 4, 2011

Ed Research: From Studying Examples to Solving Problems

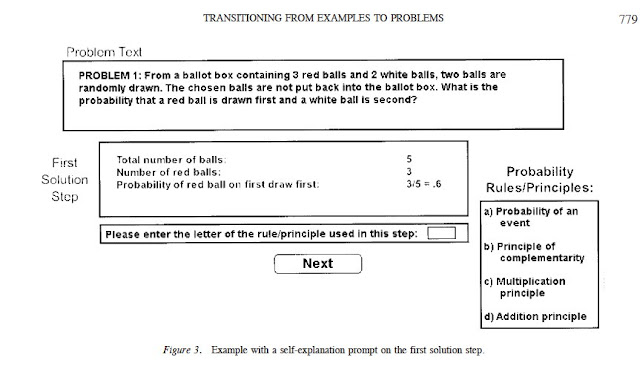

Atkinson, Renkl, Merrill: Transitioning From Studying Examples to Solving Problems: Effects of Self-Explanation Prompts and Fading Worked-Out Steps [pdf] [edit:fixed link]

I'm sharing this paper for two reasons. First, the suggested modifications require close to zero added instructional time and energy. Always a win. Second, it is a good illustration of one of the reasons I read so much research. I don't consider myself an instinctive teacher. Many of you will read this and be like, "Well.....duh." Me? Not so much. I have to put deliberate effort into improving what I do and stuff like this isn't as obvious to me (until I read it) as I'd like it to be.

Summary: The researchers combined worked examples with self-explanation prompts at each step to help facilitate far transfer.

Worked examples are something most teachers use. They've got a low instructional cost, both in terms of time and energy. When students are working on solving problems in class, I tape a few answer keys with the problems worked out around the room so they can check when they're done or when they're stuck. It's effective. The problem is that worked examples, while very good for near transfer (problems that are similar to the example), have been pretty disastrous for far transfer. Which totally makes sense, since students are usually learning steps rather than engaging with the problem.

The researchers did two specific things to improve upon the standard worked example.

Fading: The steps in the worked examples were removed in reverse order. First the last step was removed, then the second-to-last step, and so forth until the student no longer needed the examples. Makes total sense, but it's not something I normally do. My problem-solving scaffolds go straight from the full structure (an entire worked example) to nothing at all (solving independently). In an earlier study, one of the authors (Renkl) found that backward fading worked well for near transfer but not for far transfer. The study in this paper was designed to address that problem.

Self-explanation prompts: In addition to backward fading, the authors added a self-explanation prompt to each step. These were computer-based examples, so the prompts consisted of selecting a rule/principle from a multiple-choice set. Here's a pic from the paper:

Adding this prompt increased both near transfer and far transfer when compared with just backward fading.
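To make the two ideas concrete, here's a rough Python sketch of backward fading with a multiple-choice self-explanation prompt on each worked step. It's my own illustration, not the authors' materials: the `Step` class, the function names, and the three-step speed problem are all hypothetical.

```python
from dataclasses import dataclass

BLANK = "____ (your turn)"

@dataclass
class Step:
    work: str       # what the worked example shows for this step
    principle: str  # the rule/principle that justifies the step

def backward_faded_sequence(steps):
    """Fully worked example first, then blank the last step, then the last two,
    and so on until the student solves every step."""
    # The multiple-choice set: every principle used anywhere in the problem.
    options = sorted({s.principle for s in steps})
    sequence = []
    n = len(steps)
    for n_blank in range(n + 1):
        example = []
        for i, step in enumerate(steps):
            if i < n - n_blank:
                # Still-worked step, paired with a self-explanation prompt.
                example.append(f"{step.work}   Which principle? {' / '.join(options)}")
            else:
                # Faded step: the student has to do it.
                example.append(BLANK)
        sequence.append(example)
    return sequence

def check_self_explanation(step, choice):
    """True if the student picked the principle that justifies this step."""
    return choice == step.principle

# Hypothetical three-step speed problem (not from the paper).
steps = [
    Step("v = d / t", "definition of average speed"),
    Step("v = (120 m) / (40 s)", "substitute the known values"),
    Step("v = 3 m/s", "simplify"),
]

for k, example in enumerate(backward_faded_sequence(steps)):
    print(f"Example {k + 1}:")
    for line in example:
        print("   ", line)
```

The design choice worth noticing is that the prompt rides along with every step that's still worked out, so students keep explaining the steps they see while taking over more of the solution from the end.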

There's an interesting discussion at the end of the paper about cognitive load. Previous researchers found mixed results for similar treatments. The authors speculated that cognitive load may be an issue. In one study, the examples were spread out over multiple pages and in another participants were asked to type in the principles rather than just selecting them.

Cognitive load theory is interesting but often misapplied. But that's a post for another day.

Sunday, May 1, 2011

Ed Research: IMPROVE

I'm shelving the Hattie post for now. I can't do it without it turning into a 3-part series on meta-analysis. I'm hoping if I walk away from it for a little bit I'll be able to turn it into something more concise (and coherent).

Until then, I'm changing it up. I read a lot of educational research. At least, a lot for a teacher who isn't enrolled in any grad school program. I'm going to start sharing some research I've already been incorporating and later move on to just things I've found interesting but haven't figured out how to implement.

First up: IMPROVE: A Multidimensional Method for Teaching Mathematics in Heterogeneous Classrooms

IMPROVE is an acronym created by Zemira Mevarech and Bracha Kramarski out of Bar-Ilan University in Israel and describes the method they devised for mathematics instruction. It's based on three principles: metacognitive training, learning in heterogeneous cooperative grouping, and provision of feedback-corrective enrichment.

IMPROVE stands for:

Introducing the new concepts

Metacognitive questioning

Practicing

Reviewing and reducing difficulties

Obtaining mastery

Verification

Enrichment

Sounds cheesy, I know. But I'm only going to focus on one part: metacognitive questioning. The authors drew from Polya for these, but I like this structure a bit more.

For the study, Mevarech and Kramarski designed a series of question cards. I picture them as index cards with questions written on them, but I don't have any actual examples. The questions were first used when the teacher modeled problem solving for the class. Students then took turns in groups solving problems while answering the questions. The teacher would also circulate around the class and take a turn at each group (solving a problem and answering the questions along the way). Students wouldn't move on to the next problem until they reached consensus through discussion.

There were three categories.

Comprehension Questions - Students were asked to reflect on the problem first. They read it out loud, described the concept in their own words, and identified what type of problem it was.

Examples: What's the problem asking? What is it giving you? What type of problem is it? What are the essential features?

Connection Questions - Students were asked to focus on the similarities and differences between the current problem and the previous problem or problem set.

Examples: How are....and....similar? How are....and....different?

Strategic Questions - Students were asked what strategy they selected to solve the problem and for what reason.

Examples: What strategy is most appropriate? Why is this strategy most appropriate? How can the suggested plan be carried out?

The authors state that, "...students were introduced during the year to a large repertoire of strategies from which they had to select the appropriate one...." Examples given were constructing a table, drawing a diagram, and selecting the appropriate formula.

I'd love to see the list of strategies, but unfortunately it wasn't included.

I also use Reflection Questions (How do you know it's right? How could you have solved it differently?). I'm pretty sure the authors added these in later versions of IMPROVE, but I could just be making that up.

I've been using these questions for three years now. I like having frameworks for my students to work within; if I just said, "Talk about science," I'd get lots of conversations about the last Chivas game and very little actual science. I don't use cards. I just put the problems on the left side of the paper and leave a column on the right for students to fill in with their answers to the metacognitive questions. They don't do this every time, just when they're working on something new. I also use the questions when students are planning labs.

For my part, I model the questions when demonstrating something and try to reference them as explicitly as possible when I'm prompting students who are stuck.

I've been happy with it although I need to work harder at having the students generate and refer back to a list of strategies.

Addendum: When I was talking with @park_star on Twitter, she mentioned that this is how teachers in her ed program taught problem solving. So if you're in Canada, nothing new, I guess. Also, something I forgot to mention: after working a bit at building the habit, I've found that it's something I should only push when it's truly problem solving. The students need to be working on something difficult for these metacognitive questions to actually be useful. Otherwise it becomes just another task forced on them, the metacognitive equivalent of "show your work."
