One thing I'm really struggling with is the concept of "logic" or a conclusion following from its premises. It's hard for my students to understand what I mean by this and it's hard for me to explain in other terms. So far, it appears that their definition of "logical" is something along the lines of "familiar" or "what I was expecting." Any suggestions? How do you handle reasoning that is preposterous or that makes leaps of faith?

This is definitely the hard part. I don't have any magic bullets, but I can tell you where I am right now.

1. Faulty reasoning is most often due to a lack of content knowledge. At the beginning and middle of a unit, this is expected. Honing reasoning as we go is one of our primary goals.

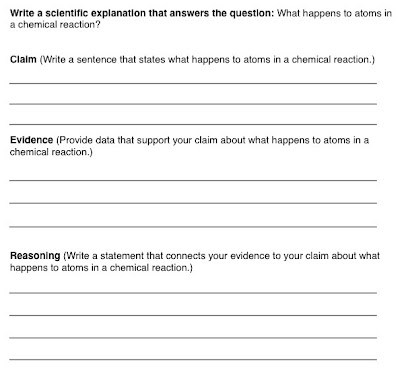

If things aren't going well, though, my best advice would be to narrow the question. Broader questions are usually more complex. I started conservation of mass with, "What happens to atoms in a chemical reaction?" (actually, the question was about why ice melts when you heat it but paper burns), and kids were flying all over the place. They generated their own claims and designed their own experiments, and it was a disaster. There was no real way to distinguish experimentally between most of their claims (atoms are being fused together, atoms are exploding, atoms turn into heat). I rebooted with whether or not atoms are destroyed in a chemical reaction, and it worked much better. We all did a similar experiment, and students were able to make a well-reasoned argument that atoms weren't destroyed.

The counterpoint is that I start other topics, like kinetic molecular theory, very broadly. I can't say for sure why some topics need to be narrower but it is some combination of how much background knowledge students come in with, how well our classroom experimentation can differentiate between competing claims, and how much time I'm willing to devote to testing competing ideas.

2. There are mechanical issues. For MS kids, sentence frames and starters go a long way. Be sure to fade them as you go. Another teacher at my school has a lot of success with sentence starters in group discourse. He posts them at his tables. Instead of CER, some teachers like C-ER-ER-ER where you give a reason after each piece of evidence.

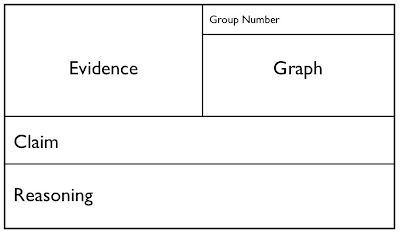

3. Ask students to explain ahead of time what different results would mean with regard to the claim. Before they could start the experiment to test whether or not atoms are destroyed, my students needed to explain to me that if the bottle got lighter, it meant atoms were being destroyed. If it weighed the same, they weren't. If it weighed more, something else was going on that we couldn't explain (this is an important and often overlooked step). In essence, I was locking them into a line of reasoning. Is that preventing them from doing any thinking? I say no, because I'm still asking my students to reason; it's just the timing that changes. If I do it after, students more often construct weird explanations for the results and engage in all kinds of magical thinking and confirmation bias. This is assuming the purpose of a lab is to test a specific claim. Sometimes a lab is just to get ideas rolling, in which case we do our reasoning after. [Edit: Have your students sketch a "prediction graph" on their whiteboards ahead of time.]

4. Distinguish between reasoning and introducing a new claim. It is hard for students to see when their reasoning is backing up a claim and when they're making a brand new assertion. I don't have a good method for this other than continually asking them to go back to what their claim actually predicts. It's also important to help them with the idea that a single experiment doesn't have to explain everything. We burn paper and weigh it, and it weighs the same. That only tells us that the atoms aren't being destroyed. That means we often need to use multiple pieces of evidence to converge on a single, more complex explanation.

5. Differentiate between logical/scientific reasoning and reasoning from evidence. I think this idea came from a paper by Deanna Kuhn [2], but I'm not sure. The part I really latched onto was that students come into experiments in science class with the idea that something has to covary. We're measuring this and manipulating that, so clearly there's a relationship between the two. Students aren't asked to actually look at the data created but simply to reason about the science involved. In hindsight, this is certainly true in most of my classes. I run some kind of amazing science lab where the null hypothesis is always rejected. My takeaway is that occasionally students need to be doing experiments where no relationship will actually be found. I've gotten better at this, both by intentionally designing such experiments in and by allowing students to run an experiment that I know will result in no relationship. In the first few attempts at this, what did I find? Students can ALWAYS find a relationship. It's a long, slow process of breaking this habit.

The other insight I got from that paper was that students don't really understand measurement uncertainty. I definitely find that to be true. I like Geoff's approach but I don't really do anything about this other than hate how I don't do anything about it.
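Going back to point 3, the "explain ahead of time what each result would mean" step amounts to a small decision table that is fixed before any data comes in. Here is a minimal sketch of that idea in Python. The function name, the gram units, and the 0.05 g tolerance are all illustrative assumptions, not details from the actual lab; the tolerance is only there as a nod to measurement uncertainty, so that a tiny wobble on the balance is not read as a real change.

```python
def interpret_mass_change(mass_before_g, mass_after_g, tolerance_g=0.05):
    """Map a before/after mass reading to the interpretation agreed on in advance.

    tolerance_g is a hypothetical stand-in for the balance's uncertainty:
    differences no larger than this count as "weighs the same".
    """
    change = mass_after_g - mass_before_g
    if abs(change) <= tolerance_g:
        return "same: atoms were not destroyed"
    if change < 0:
        return "lighter: atoms are being destroyed"
    # The often-overlooked third branch: a result the claim cannot account for.
    return "heavier: something else is going on that we can't explain yet"


# Made-up readings, for illustration only.
for before, after in [(25.00, 25.02), (25.00, 24.40), (25.00, 25.50)]:
    print(before, after, "->", interpret_mass_change(before, after))
```

The point of the sketch is the ordering: the three branches are written down before the measurement, so the result can only select an interpretation, not inspire a new one after the fact.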

This is getting long. I'm going to stop here. I've got two more posts on CER in my drafts but history has shown I'm awful at following up on promised posts.

-------

1: The flipside is the argument that more attention to persuasion will lead to better arguments. I'll write more on this next time. (EDIT: This footnote doesn't go anywhere but I'll keep it to remind me.)

2: I hadn't heard of the Education for Thinking Project until I googled her for a link. Looks right up my alley.